Cardiometry Issue 25 December 2022 p.1731-1737 DOI: 10.18137/cardiometry.

I have a question for you. I have been tasked with calculating the minimum required sample size for a multivariable ordinal logistic model with three outcomes. I am not aware of any guidance or methodological papers that specifically address how to do this for an ordinal model. I was therefore going to adhere to the guidance discussed in the paper you linked, and also summarised here:

My simple approach was to follow steps 1-4 for a binary outcome model and calculate the required minimal sample size for each of the three outcomes, for each step. I was then going to target the highest minimal sample size obtained from calculating any one of the four steps on any of the three outcomes, my thinking being that an ordinal model will be at least as powerful as a dichotomous logistic model.

However, I run into problems in steps 3 (What sample size will produce predicted values that have a small mean error across all individuals?) and 4 (What sample size will produce a small optimism in apparent model fit?). Because one of my ordinal outcomes is rare, these steps produce a ridiculously high minimal sample size, upwards of 150,000 patients. This is because the calculation assumes I am dichotomously trying to predict this rare outcome alone and assumes I have only this rare information to estimate the coefficients. This is of course not the case: I have plenty of information contained within the other, more common outcomes and intend to “exploit” the proportional odds assumption to “borrow” information from them.

Because the coefficients are estimated using all events, it seems intuitive to me that the minimal sample size calculated in steps 3 and 4 should be based on the overall proportion of individuals who experience at least one of the three possible outcomes. For this assumption to be incorrect, the power of the ordinal logistic model would have to be less than that of a logistic model based on a dichotomisation of the same outcomes (i.e. either experiencing no outcome, or any one of the three outcomes).

Does this intuition make sense? Do you know of any papers that discuss this?

These are the calculations if this helps to explain my concerns. The output of the estimated minimal required sample size is shown at the bottom for each of the four steps. We see that for steps 3 and 4, the estimated minimal sample size is almost an order of magnitude smaller if the proportion experiencing any of the three events is used, compared to the largest estimate produced by looking at any single outcome:

Step 1: What sample size will produce a precise estimate of the overall outcome risk or mean outcome value?

Targeted margin of error for estimating the overall risk of the outcome

    target_margin_error_hosp <- 0.01 # lower target as the proportion is low
    target_margin_error_icu <- 0.005 # lower target as the proportion is very low

    # For any outcome
    N1_any <- (1.96/target_margin_error_any)^2 * p_any * (1 - p_any)
    # For outpatient visit or worse
    N1_outp <- (1.96/target_margin_error_outp)^2 * p_outp * (1 - p_outp)
    # For hospital admission or worse
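As a minimal sketch of the Step 1 calculation, the margin-of-error formula `(1.96/margin)^2 * p * (1 - p)` can be wrapped in a small helper and applied to each dichotomisation of the ordinal outcome. The helper name `n_for_margin`, the proportions in `p_assumed`, and the margins in `margin_assumed` are hypothetical placeholders chosen for illustration; they are not the values from the original calculations.

```r
# Step 1: sample size needed to estimate a proportion `p` to within
# +/- `margin` (95% confidence, hence z = 1.96).
# NOTE: `n_for_margin` is an illustrative helper, not from the original post.
n_for_margin <- function(p, margin, z = 1.96) {
  stopifnot(p > 0, p < 1, margin > 0)
  ceiling((z / margin)^2 * p * (1 - p))
}

# HYPOTHETICAL anticipated proportions and target margins of error,
# one per dichotomisation of the ordinal outcome.
p_assumed      <- c(any = 0.30, outp = 0.20, hosp = 0.05)
margin_assumed <- c(any = 0.05, outp = 0.05, hosp = 0.01)

# Apply the formula element-wise; names are carried over from `p_assumed`.
n1 <- mapply(n_for_margin, p_assumed, margin_assumed)
print(n1)

# The approach described above targets the largest of these values.
print(max(n1))
```

With these placeholder inputs the rarest outcome, paired with the tightest margin, drives the Step 1 requirement. This mirrors the concern raised above about rare categories dominating the calculation.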

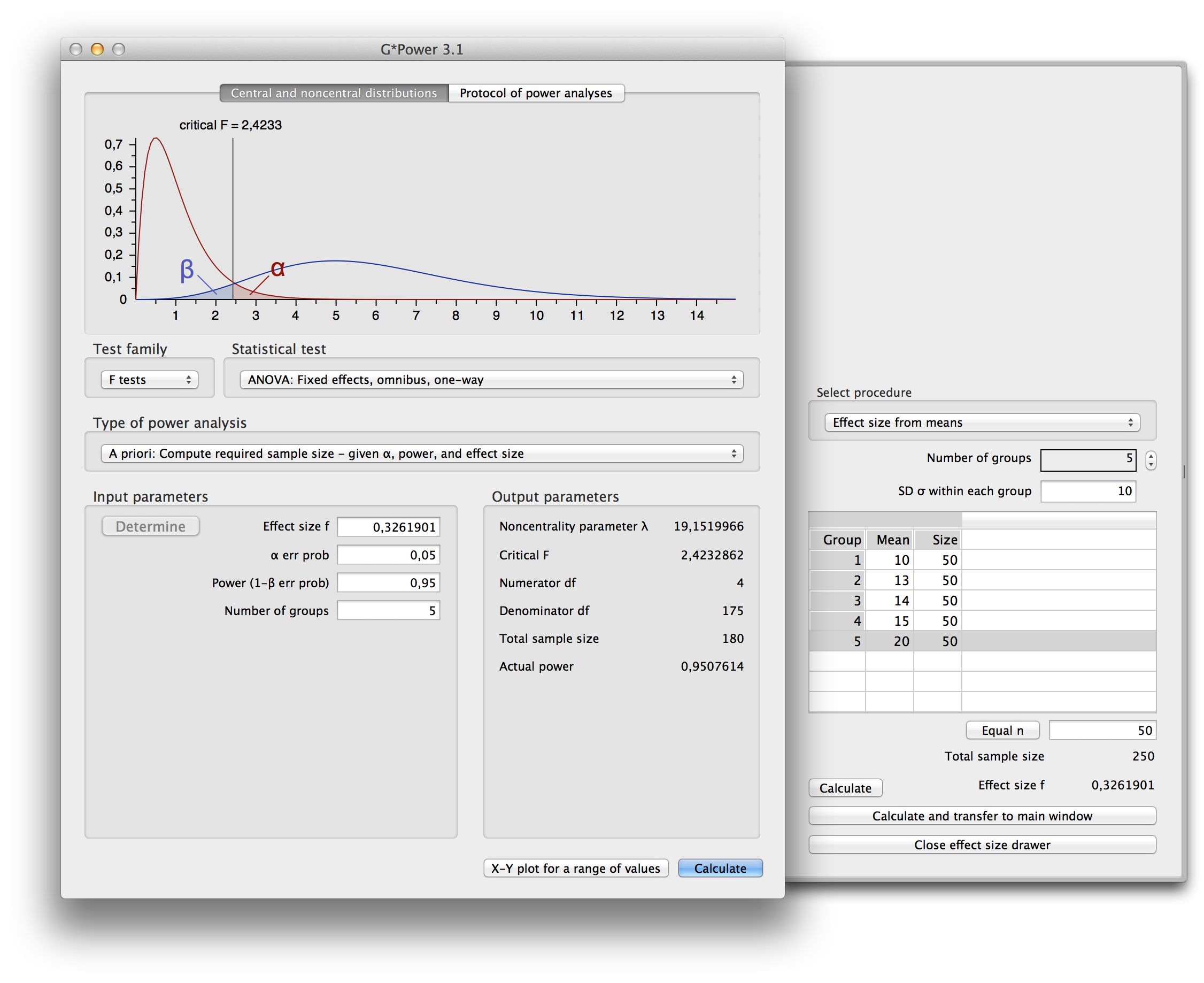

Heart Disease Prediction Based on Age Detection Using Logistic Regression over Random Forest.

Aim: To improve the accuracy in Heart Disease Prediction using Logistic Regression and Random Forest. Materials and Methods: This study contains 2 groups, i.e. Logistic Regression and Random Forest. Each group consists of a sample size of 10 and the study parameters include alpha value 0.01, beta value 0.2, and the Gpower value of 0.8. Results: The Logistic Regression achieved an accuracy of 91.60, higher than the Random Forest in Heart Disease Prediction. The statistical significance difference is 0.01 (p<0.05). Conclusion: The Logistic Regression model is significantly better than the Random Forest in Heart Disease Prediction. It can also be considered a better option for Heart Disease Prediction.
